New York’s Highest Court Rules that Juries Should be Briefed on Problems with Cross-Racial Identification

12.18.17 By Innocence Staff

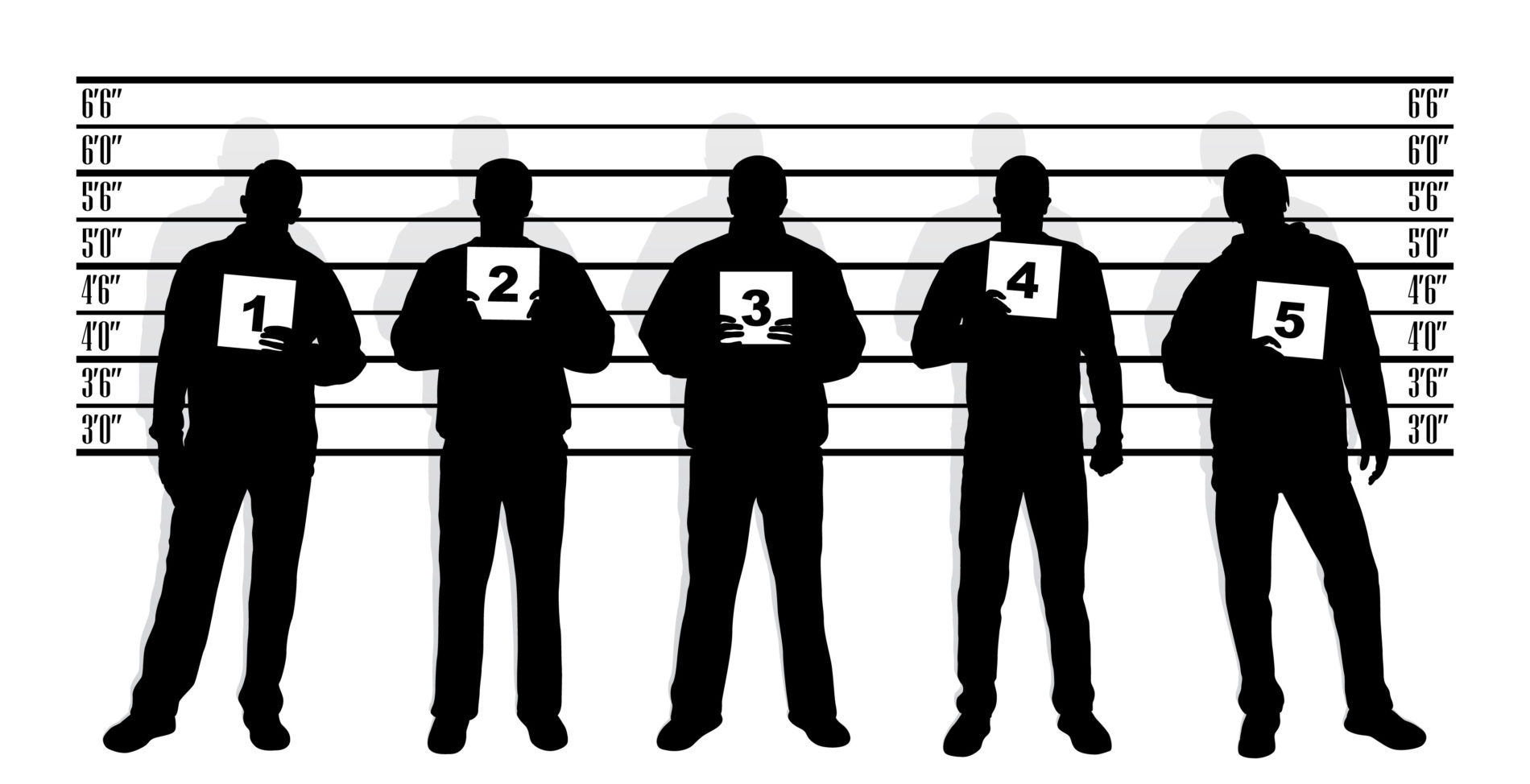

The New York State Court of Appeals issued a decision on Thursday requiring judges to instruct jurors about the unreliability of cross-racial eyewitness identifications in cases in which the defendant and the witness are or appear to be of different races.

Eyewitnesses often have trouble identifying people of a different race than their own. According to the New York Times, an analysis of 39 studies found that participants were one-and-a-half times more likely to falsely identify someone of a different race.

According to a brief filed in the case by the Innocence Project, of the 353 DNA exonerations nationwide, 70 percent involved eyewitness misidentification. Nearly half of those cases involved a defendant and a witness of different races.

Thursday’s decision stems from the case of Otis Boone, a black man who was convicted of a robbery based on identifications made by two white men. The court ruled that, in Boone’s case, the jury should have been instructed about the problematic nature of cross-racial identification, and it ordered a new trial for him.

Marne Lenox of the NAACP Legal Defense and Educational Fund told the Times that the decision is a step in the right direction for wrongful conviction reform.

“This decision helps level the playing field and prevent future wrongful convictions, especially of defendants of color, based on scientifically dubious cross-racial identifications,” Lenox said.

Read the New York Times coverage here.

Related: Courtroom Identifications: Unreliable and Suggestive

January 24, 2018 at 5:06 pm

Amazing. So the reason “all black people look the same” is that people lack the experience to discriminate between faces. Once they see the guy as nonwhite, their Deep Neural Network (https://en.m.wikipedia.org/wiki/Deep_learning) peters out and they lack the needed eigenfaces (face templates) to accurately identify the face.